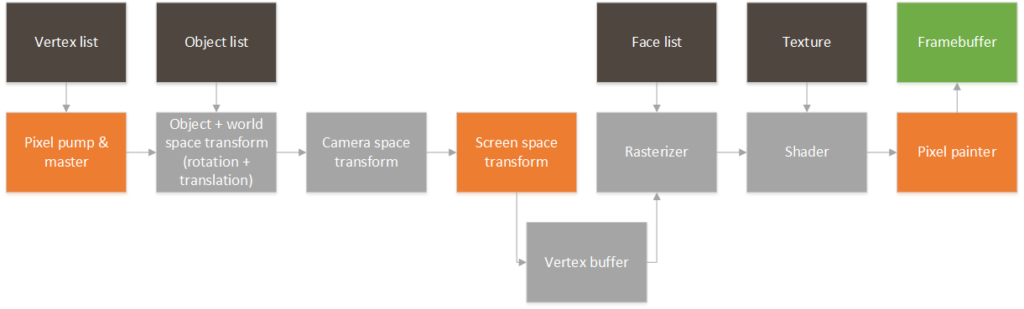

The time has finally arrived to tackle the GPU in hardware! A quick disclaimer: since this is a hobby project, I will use HLS to iterate on designs quickly and reach functional RTL. All of the blocks will be designed with RTL in mind and (given enough time) could be replaced by hand-coded VHDL/Verilog without too much hassle. This is the architecture that I am envisioning:

Each of the dark blocks is a BRAM, the green blocks are DDR memory regions, the orange blocks are IPs that I will actively implement, and the grey blocks are blocks that I am planning on creating eventually. The idea is that we start with a list of vertexes in space that correspond to the stored 3D representation of each object in the scene. These vertexes are rotated and translated according to each object's own position and rotation (object-to-world space transform). The resulting points are then shifted and rotated according to the camera's position and rotation (camera space transform). Finally, the camera-space points are mapped to 2D screen coordinates through a perspective projection (screen space transform). From here, two things can happen.

- We can choose to send those pixels directly to a pixel painter IP block. This pixel painter IP block just takes the stream of pixels and writes the data directly to the mapped framebuffer in DDR (which will be read by the VDMA we set up before). This is good enough for rendering point clouds, which we will most certainly want to do first.

- We can store those pixel locations in a local BRAM so that the on-screen coordinates of the scene's vertexes are known. This data can be pulled by a rasterizer IP block (which needs to know which set of vertexes belongs to each face) to fill each face with a color (or generate U/V coordinates + texture information for each colored pixel). This info can be taken by a shader IP that uses the texture coordinates and a texture stored in a local BRAM to find the correct color of each textured pixel. Once this data is ready, it can be passed once again to the pixel painter IP for drawing on the framebuffer.
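
To make the point-cloud path more concrete, here is a minimal software sketch of the transform chain followed by the pixel painter. It is not the actual HLS code: all names (`Vec3`, `rotate_y`, `object_to_world`, `world_to_camera`, `camera_to_screen`, `paint_pixel`) are hypothetical, rotation is limited to the Y axis to keep it short, and it uses `float` and a `std::vector` framebuffer where a real IP would more likely use fixed-point types and an AXI master port into DDR.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

struct Vec3 { float x, y, z; };
struct Pixel { int x, y; bool visible; };

// Rotate a point about the Y axis (assumption: a full design would use a
// complete 3x3 rotation matrix instead of a single angle).
Vec3 rotate_y(Vec3 v, float angle) {
    float c = std::cos(angle), s = std::sin(angle);
    return { c * v.x + s * v.z, v.y, -s * v.x + c * v.z };
}

// Object -> world: rotate by the object's rotation, then move to its position.
Vec3 object_to_world(Vec3 v, float obj_angle, Vec3 obj_pos) {
    Vec3 r = rotate_y(v, obj_angle);
    return { r.x + obj_pos.x, r.y + obj_pos.y, r.z + obj_pos.z };
}

// World -> camera: undo the camera's translation, then undo its rotation.
Vec3 world_to_camera(Vec3 v, float cam_angle, Vec3 cam_pos) {
    Vec3 d = { v.x - cam_pos.x, v.y - cam_pos.y, v.z - cam_pos.z };
    return rotate_y(d, -cam_angle);
}

// Camera -> screen: perspective divide by z, centred on the screen.
// Points at or behind the camera plane are flagged as not visible.
Pixel camera_to_screen(Vec3 v, float focal, int width, int height) {
    assert(width > 0 && height > 0);
    if (v.z <= 0.0f) return {0, 0, false};
    int px = static_cast<int>(std::lround(width / 2.0f + focal * v.x / v.z));
    int py = static_cast<int>(std::lround(height / 2.0f - focal * v.y / v.z));
    bool visible = px >= 0 && px < width && py >= 0 && py < height;
    return {px, py, visible};
}

// Pixel painter: write one colour into a linear framebuffer (row-major).
// Returns false when the pixel was clipped and nothing was written.
bool paint_pixel(std::vector<std::uint32_t>& fb, int width, Pixel p,
                 std::uint32_t color) {
    if (!p.visible) return false;
    fb[static_cast<std::size_t>(p.y) * width + p.x] = color;
    return true;
}
```

Chaining `object_to_world`, `world_to_camera`, `camera_to_screen` and `paint_pixel` for every vertex is all that the point-cloud mode needs. The same screen coordinates could instead be stored in a BRAM for the rasterizer path.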

So, this is the general idea. Most likely I’m missing a few crucial steps. In my mind the most critical part that I’m currently missing is how to perform the rasterization so that pixels that are closer to the camera are drawn on top of overlapping pixels that are further away from the camera. This most likely requires a Z-buffer, but that would involve a DDR region to store the Z-buffer, and a mechanism to clear it when each frame starts. For now, I’ll start trying to draw faces that don’t overlap and figure out the overlapping problem later on.
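
As a rough illustration of the rasterizer plus the Z-buffer idea, here is a software sketch that fills a triangle using edge functions and only writes a pixel when it is closer than what is already stored. Everything here is an assumption for illustration: the names (`ScreenVertex`, `Target`, `edge`, `fill_triangle`) are hypothetical, the depth buffer is a plain `std::vector` rather than a DDR region, and depth is interpolated linearly in screen space, which is a simplification rather than a perspective-correct result.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

struct ScreenVertex { int x, y; float z; };  // screen position + camera depth

// Colour buffer plus Z-buffer. clear() must run at the start of each frame.
struct Target {
    int width, height;
    std::vector<std::uint32_t> color;
    std::vector<float> depth;
    Target(int w, int h)
        : width(w), height(h),
          color(static_cast<std::size_t>(w) * h, 0u),
          depth(static_cast<std::size_t>(w) * h,
                std::numeric_limits<float>::infinity()) {}
    void clear(std::uint32_t background) {
        std::fill(color.begin(), color.end(), background);
        std::fill(depth.begin(), depth.end(),
                  std::numeric_limits<float>::infinity());
    }
};

// Edge function: twice the signed area of the triangle (a, b, p).
int edge(const ScreenVertex& a, const ScreenVertex& b, int px, int py) {
    return (b.x - a.x) * (py - a.y) - (b.y - a.y) * (px - a.x);
}

// Fill one face with a flat colour; returns how many pixels passed the
// depth test and were written.
int fill_triangle(Target& t, ScreenVertex v0, ScreenVertex v1,
                  ScreenVertex v2, std::uint32_t c) {
    int area = edge(v0, v1, v2.x, v2.y);
    if (area == 0) return 0;  // degenerate face, nothing to draw
    // Bounding box of the face, clipped to the screen.
    int min_x = std::max(0, std::min({v0.x, v1.x, v2.x}));
    int max_x = std::min(t.width - 1, std::max({v0.x, v1.x, v2.x}));
    int min_y = std::max(0, std::min({v0.y, v1.y, v2.y}));
    int max_y = std::min(t.height - 1, std::max({v0.y, v1.y, v2.y}));
    int written = 0;
    for (int y = min_y; y <= max_y; ++y) {
        for (int x = min_x; x <= max_x; ++x) {
            int w0 = edge(v1, v2, x, y);
            int w1 = edge(v2, v0, x, y);
            int w2 = edge(v0, v1, x, y);
            // Accept either winding order: all weights share area's sign.
            bool inside = area > 0 ? (w0 >= 0 && w1 >= 0 && w2 >= 0)
                                   : (w0 <= 0 && w1 <= 0 && w2 <= 0);
            if (!inside) continue;
            // Barycentric interpolation of depth (w0 + w1 + w2 == area).
            float z = (w0 * v0.z + w1 * v1.z + w2 * v2.z) / area;
            std::size_t idx = static_cast<std::size_t>(y) * t.width + x;
            if (z < t.depth[idx]) {  // closer than what is stored: overwrite
                t.depth[idx] = z;
                t.color[idx] = c;
                ++written;
            }
        }
    }
    return written;
}
```

The same barycentric weights (`w0`, `w1`, `w2` divided by `area`) could be reused to interpolate U/V coordinates for the shader stage. For faces that never overlap, the depth test can simply be dropped, which matches the plan of postponing the Z-buffer.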