So, now that we have a way of showing images, let's start digging into how we will actually generate the images we show. The basic idea is that a 3D scene is a digital representation of the objects we want to show, and a video card transforms this information into a 2D image that we can see on a screen. The first part is usually the job of real 3D designers, game creators, artists and whimsical programmers, who create collections of points, lights and textures that represent a 3D object. Since we are starting from scratch, we will begin by rendering a collection of points in space; these are simply a set of (x, y, z) coordinates in the world. I always like to use coordinates the way PC game designers use them:
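To make "rendering a collection of points" concrete, here is a minimal C sketch of one way a point in space can end up as a pixel: a simple pinhole perspective projection. It is only an illustration, not the method this series uses. The names `point3`, `pixel2` and `project_point` are hypothetical, and the sketch assumes a camera at the origin looking down +z with +y pointing up, which may differ from the convention preferred here.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* A point in world space (hypothetical type for illustration). */
typedef struct {
    float x, y, z;
} point3;

/* A pixel position on the screen. */
typedef struct {
    int x, y;
} pixel2;

/*
 * Project a world-space point onto a width x height screen.
 * Assumptions (not from the original post): the camera sits at the origin
 * looking down +z, +y points up, and `focal` is the distance from the eye
 * to the image plane measured in pixels.
 * Returns false for points at or behind the camera. The result may still
 * fall outside the screen, so the caller has to clip it.
 */
static bool project_point(point3 p, float focal, int width, int height,
                          pixel2 *out)
{
    assert(out != NULL);
    if (p.z <= 0.0f)
        return false;                    /* behind the eye: not visible */
    float sx = focal * p.x / p.z;        /* perspective divide */
    float sy = focal * p.y / p.z;
    out->x = width / 2 + (int)sx;        /* screen origin at the centre */
    out->y = height / 2 - (int)sy;       /* screen y grows downward */
    return true;
}
```

In a renderer, `project_point` would run over every point in the scene, and each result that lands inside the screen would be written into the framebuffer. Points farther away (larger z) end up closer to the centre, which is what gives the image its sense of depth.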

Monthly Archives: January 2017

GPU Project 02 – Basic frame buffer and DMA code

The idea in the previous post was to create a framebuffer display circuit that could serve as a generic part of the video card, sending the contents of the framebuffer to a monitor or TV. After I moved to a different computer, I realized how inconvenient it is to have a project tied to a particular set of board files: they are not copied along with the project. So I removed the dependency on the board files and synthesized the design again. (The updated Vivado project and bitstream are attached.)

So, with the FPGA fabric in place, we need a basic application running on the Zynq’s ARM core that writes to the framebuffer and configures the VDMA block. This lets us verify that the output VDMA and the VGA circuit are working correctly.
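A minimal sketch of what such an application could do, written in C for the ARM core. None of the specifics come from the post: it assumes a 640x480 mode with 32-bit pixels packed as 0x00RRGGBB, an AXI VDMA with 32-bit addressing, and the MM2S (read channel) register offsets and programming order as documented in Xilinx PG020, so check them against the core version in your design. The helper names (`reg_write`, `fill_test_pattern`, `vdma_start_mm2s`) are hypothetical. Registers are accessed through a plain pointer so the same logic can also be exercised on a PC against an ordinary array.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Assumed video mode: 640x480, 4 bytes per pixel as 0x00RRGGBB. */
#define FB_WIDTH   640u
#define FB_HEIGHT  480u
#define FB_BPP     4u
#define FB_STRIDE  (FB_WIDTH * FB_BPP)  /* bytes per line, no padding */

/* AXI VDMA read-channel (MM2S) register byte offsets, per PG020. */
#define MM2S_VDMACR         0x00u
#define MM2S_VSIZE          0x50u
#define MM2S_HSIZE          0x54u
#define MM2S_FRMDLY_STRIDE  0x58u
#define MM2S_START_ADDR1    0x5Cu

#define VDMACR_RS           (1u << 0)   /* run/stop bit */

/* Write a 32-bit register at a byte offset from the VDMA base. */
static void reg_write(volatile uint32_t *base, uint32_t offset, uint32_t value)
{
    base[offset / 4u] = value;
}

/* Fill the framebuffer with eight vertical colour bars as a test pattern
 * (white, yellow, cyan, green, magenta, red, blue, black). */
static void fill_test_pattern(uint32_t *fb)
{
    static const uint32_t bars[8] = {
        0x00FFFFFFu, 0x00FFFF00u, 0x0000FFFFu, 0x0000FF00u,
        0x00FF00FFu, 0x00FF0000u, 0x000000FFu, 0x00000000u,
    };
    assert(fb != NULL);
    for (uint32_t y = 0; y < FB_HEIGHT; y++)
        for (uint32_t x = 0; x < FB_WIDTH; x++)
            fb[y * (FB_STRIDE / FB_BPP) + x] = bars[x * 8u / FB_WIDTH];
}

/*
 * Point the VDMA read channel at the framebuffer and start it, following
 * the PG020 order: control, start address, stride, HSIZE, then VSIZE.
 * Writing VSIZE is what kicks off the transfer, so it must come last.
 * Circular_Park (bit 1) is left at 0, so the engine parks on frame store 1,
 * assuming the park pointer register still holds its reset value of 0.
 */
static void vdma_start_mm2s(volatile uint32_t *vdma, uint32_t fb_phys)
{
    assert(vdma != NULL);
    reg_write(vdma, MM2S_VDMACR, VDMACR_RS);
    reg_write(vdma, MM2S_START_ADDR1, fb_phys);
    reg_write(vdma, MM2S_FRMDLY_STRIDE, FB_STRIDE);  /* frame delay = 0 */
    reg_write(vdma, MM2S_HSIZE, FB_WIDTH * FB_BPP);  /* bytes per line */
    reg_write(vdma, MM2S_VSIZE, FB_HEIGHT);          /* lines per frame */
}
```

On the board, `vdma` would be the VDMA base address from the generated `xparameters.h` cast to `volatile uint32_t *`, and `fb` a buffer in DDR whose address is passed as `fb_phys` (in a standalone bare-metal setup the pointer value is normally the physical address). Because the ARM's data cache sits between the CPU and DDR, the framebuffer should be flushed, for example with `Xil_DCacheFlushRange`, after filling it and before starting the VDMA; otherwise the VDMA may read stale memory.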

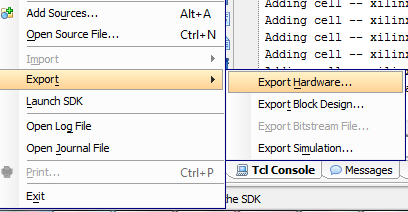

So, first things first. We need to get a bare-metal application working on the board. I exported the .HDF file from the Vivado project and fired up Xilinx SDK.

Random – CocaCola wireless headphones

Completely random post, but I had to share these CocaCola wireless earbuds that I found at a Tillys store yesterday. This is how they came in the box: